Data is everywhere. Whether you are an individual, a small company, or a multinational firm, you are probably dealing with a lot of data, including the customer data you need to serve customers and improve your bottom line.

Marketers use a variety of techniques to increase profits. Understandably, not every technique works, and those that do are not equally effective.

You can’t build a campaign on a hunch or a feeling. You need numbers, but the numbers are not always clear. This is why businesses need A/B testing, a method that helps them choose the right route.

In this article, we’ll explain A/B testing, go over its benefits, and look at some of the best A/B testing software.

Let’s start:

What is A/B Testing?

A/B testing is a method for comparing two ways of achieving the same goal to find the one that produces better results.

We use A/B testing almost every day and the technique is said to be over 100 years old. However, it’s now becoming more popular thanks to the introduction of online marketing. Marketers use A/B tests to compare two marketing methods to find the one that offers the best return on investment; however, that’s not the only use of A/B tests.

Biologist and statistician Ronald Fisher pioneered randomized controlled experiments in the 1920s. He worked out the basic mathematics and principles and turned the idea into a science.

Fisher ran several agricultural experiments to answer basic questions such as, “What happens if I switch fertilizers or use more fertilizer?”

The principles he introduced held up, and scientists began running clinical trials in medicine in the early 1950s.

Marketers adapted the technique in the late 1960s. They wanted to evaluate direct marketing campaigns, for example, whether personalized letters or postcards produced more sales.

However, A/B testing looked different back then. It took its current form in the mid-1990s. It uses the same concepts but now runs online and in real time.

What Are the Benefits of A/B Testing?

Now that you know the definition of A/B testing, it’s time to look at its main advantages.

Saves Money

A/B testing allows businesses to save money by identifying the processes that offer better returns. No two marketing campaigns offer identical returns; one will almost always do better than the other.

With A/B testing data, businesses can find the option that offers better returns, drop the one that offers lower returns, and spend money where it pays more.

Increases Profits

As the definition of A/B testing suggests, it helps increase profits by improving conversions and allowing the business to reach more people. About 60 percent of businesses believe it helps improve conversion.

In addition, acting on A/B test results can improve bounce rates and increase engagement. These factors help a business grow. At the end of the day, the business makes more money through reduced costs and increased sales.

Helps Identify Issues

A lot of marketing campaigns fail due to small errors. The best A/B testing tools can help you spot these errors so that the business can run smoothly.

They can help identify many problems, such as poor UX design. This is important because a better design can increase conversion by up to 400 percent.

Improves Content

Despite what everyone says, content still rules. The problem, however, is that there are many options to choose from, including written content and visual content.

You can’t always be sure what will work and what won’t unless you have reliable A/B testing data analysis.

Good for Business Image

A/B testing has become very popular and over 70 percent of companies run at least two tests a month. A/B testing for websites allows businesses to get rid of processes or steps that leave a bad customer impression.

As a result, the image gets a boost and goodwill increases.

Makes Analysis Easier

About 77 percent of businesses run A/B tests on their websites (including landing pages) to identify design, font, and other such issues.

This helps reduce cart abandonment by highlighting what causes buyers to abandon a cart. There can be a variety of reasons, such as a poor layout or hidden costs.

With A/B testing, businesses can find the real cause and work on it.

More Engagement

Companies look for engaged followers and buyers, so it doesn’t come as a surprise that 59 percent of businesses run A/B tests on emails. These tests can help businesses identify what kind of content works best so they can concentrate on it.

A/B testing has been a critical component of my marketing strategy over the years. By constantly testing different marketing elements, both in terms of copy and design, Plixpay has been able to consistently improve the response rates for my campaigns. Whether it’s optimizing my subject lines or tweaking the layout of an email newsletter, A/B testing helps me get the most out of every campaign that I run.

How Does A/B Testing Work?

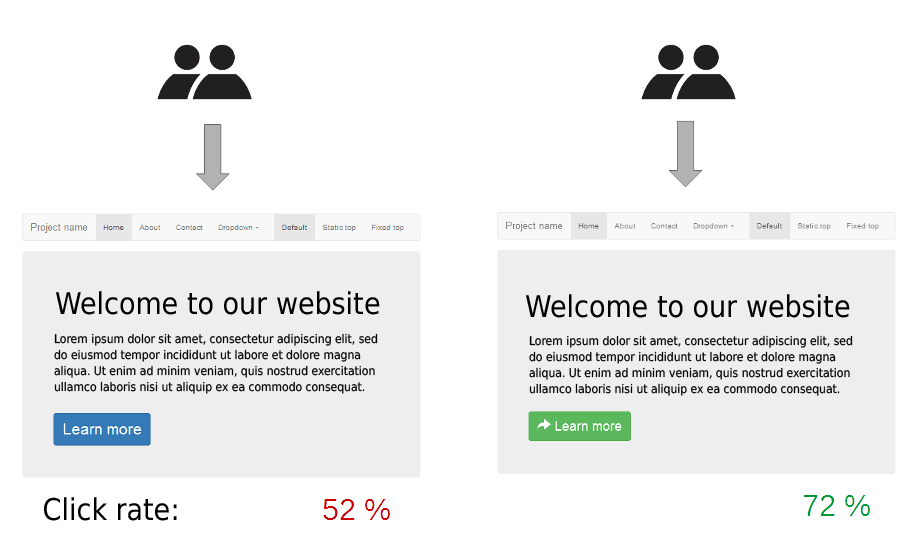

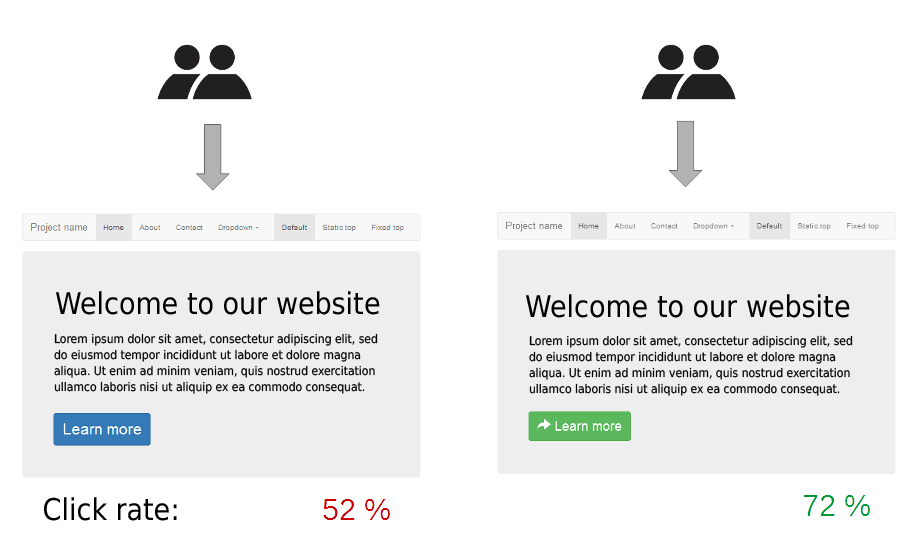

A/B testing might sound like a complex process, but it’s actually very simple. The first step is to decide what you wish to test and why.

Let’s say you wish to test the size of the ‘Buy Now’ button on your site to see how many people ‘buy’ if you change the size, i.e., make it bigger or smaller. Once you’re clear on what you wish to test, you need to decide how you’re going to evaluate performance.

How many people click on the button, for example, can be a good indication of how the size of the button impacts perception.

You can also use the number of final buyers to make a judgment but that may not be a fair option because visitors may abandon a purchase due to other reasons as well.

In the next step, you will have to divide users into two sets. The assignment must be random unless you’re trying to study how users from a specific demographic react to a change.
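One way to make the random split concrete is to hash each visitor’s ID and use the result to pick a set. The sketch below is illustrative only: the function name `assign_variant`, the experiment label, and the `user-123` style IDs are made up for this example, and a real testing tool will have its own mechanism.

```python
import hashlib


def assign_variant(user_id: str, experiment: str = "buy-now-button-size") -> str:
    """Place a visitor in set 'A' or set 'B' (hypothetical helper).

    Hashing the experiment name together with the visitor ID gives a split
    that behaves like a coin flip but always returns the same answer for the
    same visitor, so nobody sees both button sizes.
    """
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # a number from 0 to 99
    return "A" if bucket < 50 else "B"


# Example: split 10,000 made-up visitors and count each set.
counts = {"A": 0, "B": 0}
for i in range(10_000):
    counts[assign_variant(f"user-{i}")] += 1
print(counts)  # the two sets should be roughly equal in size
```

Because the split is driven by the visitor ID rather than by anything the visitor does, it stays random with respect to behavior while remaining stable across visits.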

Next, create two pages that are identical except for the button size, and serve one to each set. Then look at the analytics and see which page gets more clicks.

The decision to click depends on several factors, such as the size of the button, the color of the text, and the device one is using. For clarity, you can divide your users into specific groups, e.g., mobile users and desktop users.

This is because the same button may look different on mobile and on desktop. This way you will know which button to serve to which users.

“The A/B test can be considered the most basic kind of randomized controlled experiment,” says Kaiser Fung, the man behind several books including Number Sense: How to Use Big Data to Your Advantage.

“In its simplest form, there are two treatments and one acts as the control for the other,” he adds. Make sure to correctly estimate the size of your sample so that the result reflects a real difference and not background noise.

Other variables can affect results too. For example, mobile users may dislike clicking buttons, or the button may not be positioned correctly on the desktop version of your website.

Randomization can, by chance, leave one set with more mobile users than the other, which can give that set a lower or higher click rate regardless of the size of the button.

The best way to avoid such biases is to divide visitors into desktop and mobile users first and then randomly assign them to specific sets. This trick is known as blocking.
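Blocking can be sketched in a few lines. The example below assumes you already have a list of visitors with a known device type, which is a simplification (real traffic arrives continuously); the function name `blocked_assignment` and the visitor IDs are hypothetical.

```python
import random
from collections import defaultdict


def blocked_assignment(visitors, seed=42):
    """Randomly split visitors into sets A and B within each device block.

    visitors: list of (visitor_id, device) tuples, e.g. ("m1", "mobile").
    Returns a dict mapping visitor_id to "A" or "B".
    """
    rng = random.Random(seed)

    # Step 1: group visitors into blocks by device.
    blocks = defaultdict(list)
    for visitor_id, device in visitors:
        blocks[device].append(visitor_id)

    # Step 2: shuffle inside each block and give half of it to each set.
    assignment = {}
    for ids in blocks.values():
        rng.shuffle(ids)
        half = len(ids) // 2
        for visitor_id in ids[:half]:
            assignment[visitor_id] = "A"
        for visitor_id in ids[half:]:
            assignment[visitor_id] = "B"
    return assignment


# Example with 60 mobile and 40 desktop visitors (made-up data).
visitors = [(f"m{i}", "mobile") for i in range(60)]
visitors += [(f"d{i}", "desktop") for i in range(40)]
assignment = blocked_assignment(visitors)
mobile_in_a = sum(1 for v, d in visitors if d == "mobile" and assignment[v] == "A")
print(mobile_in_a)  # 30: each set gets exactly half of the mobile visitors
```

With this approach both sets end up with the same mix of mobile and desktop visitors, so a difference in click rate cannot be explained by device alone.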

A/B Testing and Results: How to Interpret

This was a basic example. In the real world, you will test not only the size but other factors as well, including the text, the position, and the color of the button.

A/B testing analysts are known to run sequential tests to compare different elements. They’ll first test the size of the button (small or large), then move to the color (red or blue), then the position (top or bottom), etc.

This helps them work toward the best version of the page. Testing one element at a time matters because changing multiple factors at once makes it difficult to tell what’s causing changes in behavior (i.e., the number of clicks).

However, we now have A/B testing tools that can handle complex tests.

“With A/B testing, we tend to want to run a large number of simultaneous, independent tests, in large part because the mind reels at the number of possible combinations you can test,” Fung says.

“Using mathematics, you can smartly pick and run only certain subsets of those treatments; then you can infer the rest from the data,” he suggests.

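This trick is known as “multivariate” testing. It’s an extension of A/B testing: instead of comparing just two versions, you vary several elements at once and compare many versions, so it is no longer only an A/B test but an A/B/C test and so on.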
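To see why the number of combinations grows so quickly, and what running “only certain subsets” can look like, here is a small sketch using the button size, color, and position from the example above. The half-fraction rule used here is one standard choice from experimental design and is an assumption on our part, not necessarily the method Fung has in mind. It only works when every element has exactly two options.

```python
from itertools import product

# The three elements from the button example, each with two options.
factors = {
    "size": ["small", "large"],
    "color": ["red", "blue"],
    "position": ["top", "bottom"],
}

# Every possible combination (a "full factorial" design): 2 x 2 x 2 pages.
full_design = [dict(zip(factors, combo)) for combo in product(*factors.values())]


def half_fraction(factors):
    """Pick half of the combinations so each option still appears equally often.

    Options are coded 0 or 1; a combination is kept when the codes add up to
    an even number. This is a classic half-fraction of a two-level design.
    """
    names = list(factors)
    runs = []
    for levels in product([0, 1], repeat=len(names)):
        if sum(levels) % 2 == 0:
            runs.append({name: factors[name][lvl] for name, lvl in zip(names, levels)})
    return runs


print(len(full_design))  # 8 pages to build if you test everything
for page in half_fraction(factors):
    print(page)  # 4 pages; each size, color, and position appears twice
```

Testing four pages instead of eight still tells you how each element performs on its own, at the cost of not being able to separate an element’s effect from the way the other two elements interact.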

A/B Testing and Results: How to Interpret

Most marketers and analytics experts use split testing tools to perform such tests. You will find plenty of A/B testing software out there, but not all of it may be suitable for you.

You must know how to do A/B testing so you can interpret results. Remember that the right tool depends on what you wish to test.

For example, Adoric can handle a variety of tasks, including A/B testing.

Adoric is a complete software package that can help you run, manage, and analyze campaigns so you can identify the best one and use your resources in the right manner.

The main purpose of A/B testing is to increase conversions. You can do so by changing a variety of elements, such as the font size, the text, and the use of images. You can also use it to test website design elements and other such features.

Adoric mainly concentrates on pop-ups, a marketing tool that can offer a conversion rate of 11% if used correctly. Our software can help you compare different pop-up designs and options to pick the right one.

Adoric is used by names like P&G, PMI, and Toyota. Trust a name that the brands you love trust.

You need to look for software that not only provides numbers but also explains what they mean. Otherwise, you will have to hire an A/B tester or statistician to interpret results.

There are both paid and free split testing tools; however, we suggest that you go for a paid version, as these tend to be more detailed and easier to use. Such software typically presents two conversion rates or reports:

- One for users who saw your typical page

- The other for users who saw the test page

The report typically highlights several factors. Look for differences between important figures such as the number of clicks.

You may also see the following information:

- Control: 15 percent (+/- 2.2 percent)

- Variation: 18 percent (+/- 1.9 percent)

This means that about 18 percent of your visitors or readers opened the email with your new subject line. The figure has a margin of error of 1.9 percent.

Strictly speaking, this doesn’t mean the actual rate is between 16.1 percent and 19.9 percent.

“The real interpretation is that if you ran your A/B test multiple times, 95 percent of the ranges will capture the true conversion rate — in other words, the conversion rate falls outside the margin of error 5 percent of the time (or whatever level of statistical significance you’ve set),” Fung explains.

If this is too hard to understand, know that you’re not the only one. Turn to software that can present this information neatly so that it’s easy for you to comprehend and use.

Based on this result, the new subject line appears to be more effective, as it’s causing more people to open the email. However, because of the margin of error, we cannot say exactly how many people will open an email, only that the open rate is likely to be higher than the current one.
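If you want to see where figures like these come from, the sketch below computes a margin of error for an open rate and then checks whether the gap between the two versions is larger than chance alone would explain. The recipient counts (1,000 for the control and 1,500 for the variation) are hypothetical; they were picked so that the margins land close to the 2.2 and 1.9 percent shown above. The function names are ours, and the formulas are the usual normal-approximation ones, not necessarily what any particular tool uses.

```python
import math

Z_95 = 1.96  # z value that corresponds to a 95 percent confidence level


def margin_of_error(rate, n, z=Z_95):
    """Margin of error for a rate (e.g. 0.18) measured on n recipients."""
    return z * math.sqrt(rate * (1 - rate) / n)


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Compare two rates; returns (z statistic, two-sided p-value)."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts: 150 of 1,000 opened the control email (15 percent),
# 270 of 1,500 opened the variation (18 percent).
print(round(margin_of_error(0.15, 1000) * 100, 1))  # about 2.2 (percent)
print(round(margin_of_error(0.18, 1500) * 100, 1))  # about 1.9 (percent)

z, p_value = two_proportion_z_test(150, 1000, 270, 1500)
print(z, p_value)
```

With these made-up counts the p-value comes out close to 0.05, which is a borderline result. That is a useful reminder that a higher observed rate on its own is not a guarantee.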

A/B Testing: Mistakes to Avoid

Here are some of the most common A/B testing mistakes. Make sure to avoid these:

Ending Tests Too Soon

It’s believed that about 57 percent of experimenters end A/B tests as soon as the results appear to confirm their original hypothesis. Known as p-hacking, this is a form of inflation bias that amounts to ‘selective reporting’ and can produce misleading results.

It’s important to let each test run its planned course even if you’re able to see results in real time.
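A quick simulation shows why stopping early is risky. In the sketch below both versions are identical (the same 10 percent click rate), so any “winner” is a false alarm. One experimenter checks the result after every 100 visitors and stops as soon as it looks significant; the other waits until the end. All the numbers and names here are made up for illustration.

```python
import math
import random


def z_score(conv_a, conv_b, n):
    """Two-proportion z statistic for two sets with n visitors each."""
    pooled = (conv_a + conv_b) / (2 * n)
    se = math.sqrt(pooled * (1 - pooled) * 2 / n)
    return 0.0 if se == 0 else (conv_b - conv_a) / n / se


def simulate(runs=400, visitors=1000, look_every=100, rate=0.10, seed=1):
    """Return (false-alarm rate when peeking, false-alarm rate at the end)."""
    rng = random.Random(seed)
    stopped_early = 0
    significant_at_end = 0
    for _ in range(runs):
        conv_a = conv_b = 0
        peeked_significant = False
        for n in range(1, visitors + 1):
            # Both sets convert at the same true rate: there is no real winner.
            conv_a += rng.random() < rate
            conv_b += rng.random() < rate
            if n % look_every == 0 and abs(z_score(conv_a, conv_b, n)) > 1.96:
                peeked_significant = True  # a peeker would stop and celebrate
        stopped_early += peeked_significant
        significant_at_end += abs(z_score(conv_a, conv_b, visitors)) > 1.96
    return stopped_early / runs, significant_at_end / runs


peeking_rate, patient_rate = simulate()
print(peeking_rate, patient_rate)
```

The experimenter who waits sees a false alarm roughly 5 percent of the time, as expected at a 95 percent confidence level. The one who peeks ten times gets many more, simply because there are more chances for noise to cross the line.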

Not Having a Decent Sample

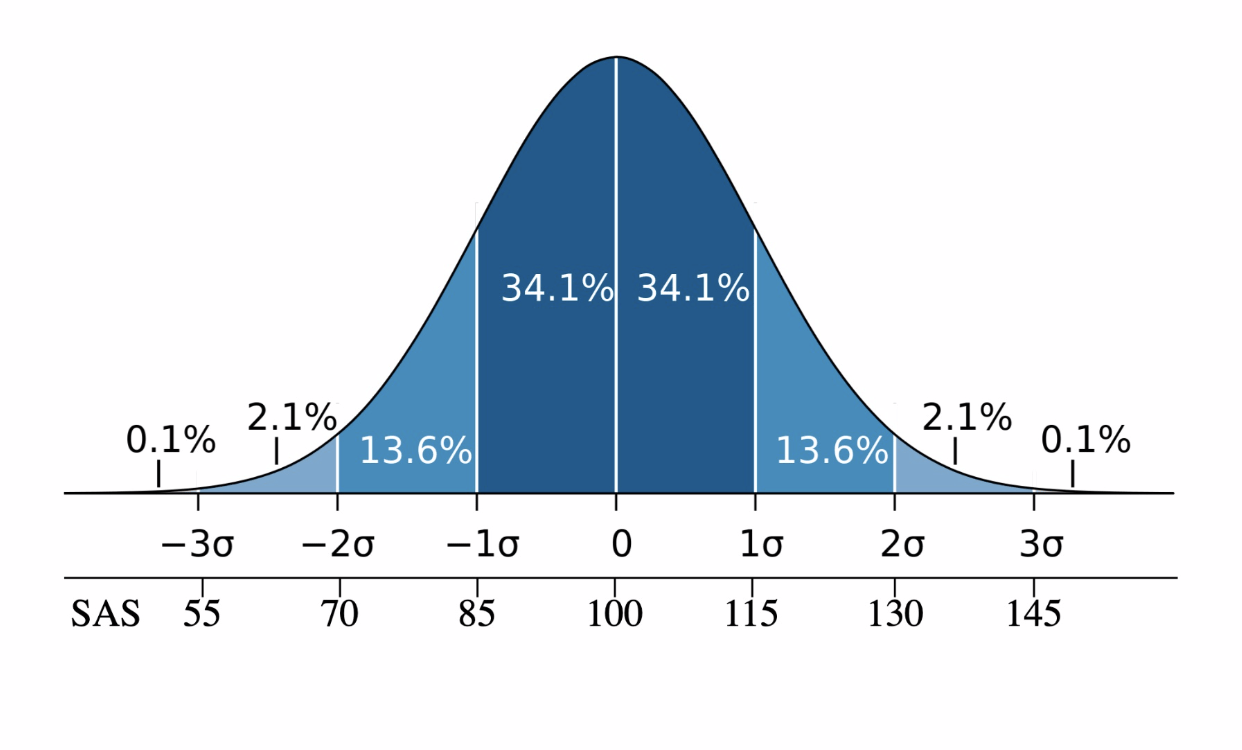

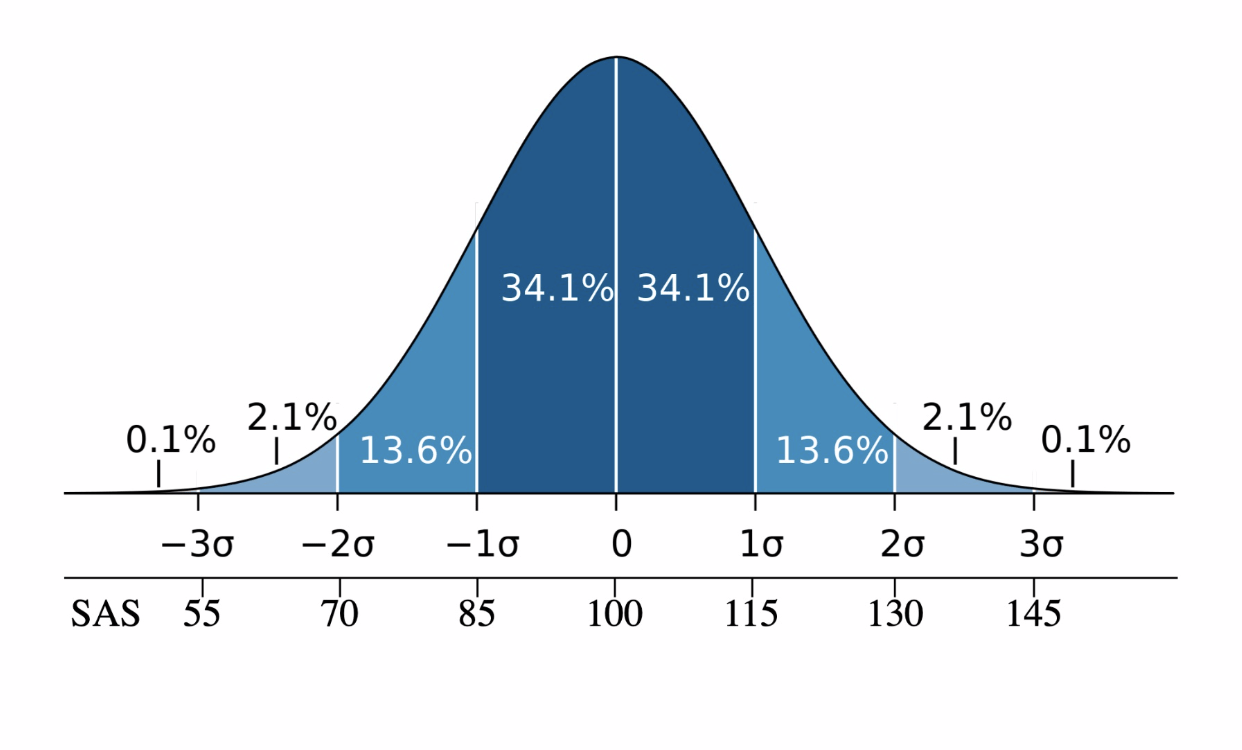

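A commonly quoted figure is that an A/B test needs about 25,000 visitors to reach a significant sample, although the exact number depends on your current conversion rate and the size of the change you want to detect.

Sadly, most marketers use a smaller sample, which does not truly represent the total population, so the result ends up being ‘unreliable’.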
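You can estimate the sample you need before starting a test. The sketch below uses a standard textbook formula for comparing two rates; the 95 percent confidence level and 80 percent power are common defaults that we are assuming here, and the function name `visitors_per_variant` is hypothetical.

```python
import math
from statistics import NormalDist


def visitors_per_variant(baseline, lift, alpha=0.05, power=0.80):
    """Rough number of visitors needed in EACH set.

    baseline: current conversion rate, e.g. 0.15 for 15 percent
    lift:     smallest absolute improvement worth detecting, e.g. 0.03
    alpha:    accepted false-positive rate (0.05 means 95 percent confidence)
    power:    chance of detecting the lift if it is really there
    """
    p1 = baseline
    p2 = baseline + lift
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


# Detecting a jump from 15 to 18 percent needs far fewer visitors than
# detecting a jump from 15 to 16 percent.
print(visitors_per_variant(0.15, 0.03))
print(visitors_per_variant(0.15, 0.01))
```

The smaller the improvement you are looking for, the more visitors you need, which is why small tweaks on low-traffic pages often produce unreliable results.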

Little Retesting

Very few companies opt for retesting. Most test once and trust the result. Research has shown that once may not be enough because of the risk of false positives.

Moreover, you should retest every few months because things may change. For example, you may gain new visitors who prefer a different color or size of button.

You are unlikely to find the right option without retesting.

Counting Too Many Metrics

While complex tests are useful, they may not always be efficient. Looking at too many metrics at a time can produce “spurious correlations”.

Even if your software offers a large number of metrics, you must know which ones to concentrate on. This will help you avoid being misled by random fluctuations and keep your attention on the figures that matter.
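The arithmetic behind this warning is short. If each metric has a 5 percent chance of showing a difference purely by luck, the chance that at least one of them does grows quickly as you add metrics. The sketch below assumes the metrics are independent, which is a simplification, and includes the Bonferroni correction as one common (and conservative) way to compensate; both function names are ours.

```python
def chance_of_false_alarm(num_metrics, alpha=0.05):
    """Chance that at least one of several independent metrics looks
    significant by luck alone, when nothing has really changed."""
    return 1 - (1 - alpha) ** num_metrics


def bonferroni_threshold(num_metrics, alpha=0.05):
    """Stricter per-metric threshold that keeps the overall false-alarm
    chance at or below alpha (Bonferroni correction)."""
    return alpha / num_metrics


for k in (1, 5, 10, 20):
    print(k, round(chance_of_false_alarm(k), 2), bonferroni_threshold(k))
```

With 10 metrics the chance of at least one false alarm is already about 40 percent, which is why it pays to decide on one or two key metrics before the test starts.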

A/B Testing: Frequently Asked Questions

Do big companies use A/B testing?

Yes, they do. Google ran its first test in 2000 to determine the right number of results per page. The company still actively uses A/B testing and ran over 7,000 tests in 2011.

Other big names like Booking.com, Facebook, and Amazon also regularly conduct controlled experiments. It’s also used in politics.

The Obama campaign raised an additional $75 million due to improved decision making credited to A/B testing. It also increased donation conversions by around 79 percent.

How long do A/B tests last?

They can last from one hour to a week to a month, depending on what you’re trying to test.

For example, a company testing a subscription model should run the test for at least a month.

On the other hand, an email marketing test will give you results in 24 to 48 hours, since more than 50 percent of people read work-related emails within about 24 hours.

Who Needs A/B Testing?

Every online marketer or online business needs A/B testing to identify the right marketing technique.

It’s used to compare all elements that can affect the decision of your end buyer. You will see it being used in SEO, email marketing, web development, etc.

A/B Testing: Conclusion

In simple words, A/B testing is used to compare two options and find the one that offers better results. Don’t let anything confuse you. Try Adoric if you’re looking for friendly A/B testing software, and watch your profits grow.
